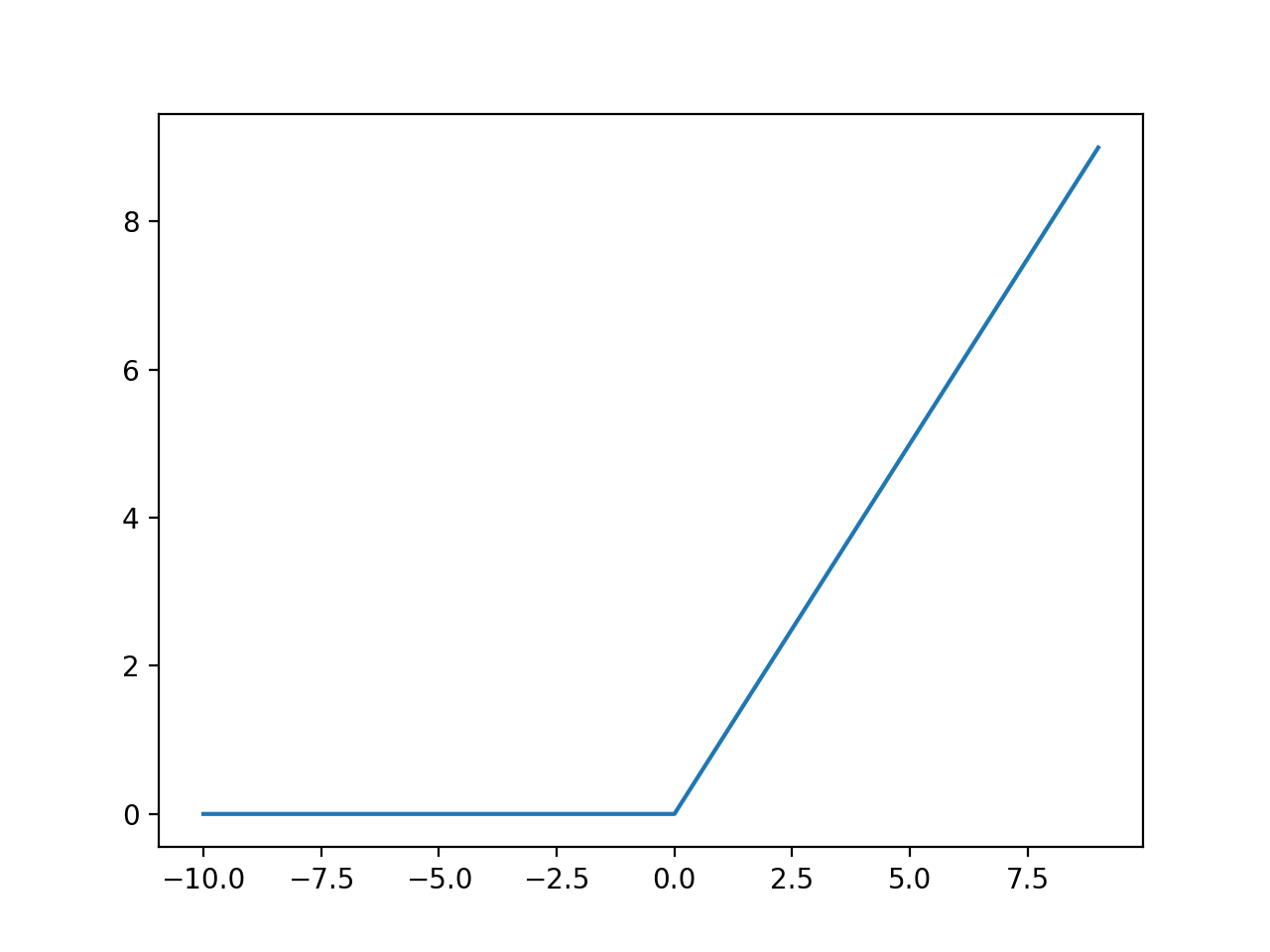

Swish activation function derivative
11/10/2023

In the image above, you can see a neural network made of interconnected neurons. Each of them is characterized by its weight, bias, and activation function.

Input Layer
The input layer takes raw input from the domain. No computation is performed at this layer. Nodes here just pass on the information (features) to the hidden layer.

Hidden Layer
As the name suggests, the nodes of this layer are not exposed. They provide an abstraction to the neural network. The hidden layer performs all kinds of computation on the features entered through the input layer and transfers the result to the output layer.

Output Layer
The output layer is the final layer of the network. It takes the information learned through the hidden layers and delivers the final value as a result.

Note: All hidden layers usually use the same activation function. However, the output layer will typically use a different activation function from the hidden layers. The choice depends on the goal or type of prediction made by the model.

When learning about neural networks, you will come across two essential terms describing the movement of information: feedforward and backpropagation.

Feedforward Propagation: the flow of information occurs in the forward direction. The input is used to calculate some intermediate function in the hidden layer, which is then used to calculate an output. In feedforward propagation, the activation function is a mathematical “gate” between the input feeding the current neuron and its output going to the next layer.

Activation functions introduce an additional step at each layer during forward propagation, but the computation is worth it. Let’s suppose we have a neural network working without activation functions. In that case, every neuron will only be performing a linear transformation on the inputs using the weights and biases. It then doesn’t matter how many hidden layers we attach to the neural network: all layers will behave in the same way, because the composition of two linear functions is itself a linear function.

Although the neural network becomes simpler, learning any complex task is impossible, and our model would be just a linear regression model.

3 Types of Neural Networks Activation Functions
Now that we’ve covered the essential concepts, let’s go over the most popular neural network activation functions.

Binary Step Function
The binary step function depends on a threshold value that decides whether a neuron should be activated or not. The input fed to the activation function is compared to a certain threshold. If the input is greater than the threshold, the neuron is activated; otherwise it is deactivated, meaning that its output is not passed on to the next hidden layer.

A linear activation function has two major problems. First, backpropagation cannot learn anything useful from it, because the derivative of the function is a constant and has no relation to the input x. Second, all layers of the neural network will collapse into one if a linear activation function is used.
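The binary step rule and the two problems with a linear activation function can be checked with a few lines of plain Python. This is a minimal sketch rather than code from the original article: the function names, the threshold of 0, and the example weights and biases are all illustrative assumptions.

```python
# Illustrative sketch only. The names binary_step, linear_layer and
# numerical_derivative, the default threshold, and the weights below
# are assumptions made for this example.

def binary_step(x, threshold=0.0):
    """Return 1 if the input is greater than the threshold, else 0."""
    return 1 if x > threshold else 0


def linear_layer(x, w, b):
    """One neuron with a linear (identity) activation: w * x + b."""
    return w * x + b


def numerical_derivative(f, x, eps=1e-6):
    """Central-difference estimate of df/dx at x."""
    return (f(x + eps) - f(x - eps)) / (2 * eps)


# Binary step: the neuron fires only when the input exceeds the threshold.
step_outputs = [binary_step(x) for x in (-2.0, 0.0, 0.5, 3.0)]

# Problem 1: the derivative of a linear activation is the same constant
# (here the weight w1) for every input, so it says nothing about x.
w1, b1 = 2.0, 1.0
slopes = [
    numerical_derivative(lambda v: linear_layer(v, w1, b1), x)
    for x in (-5.0, 0.0, 7.0)
]

# Problem 2: two stacked linear layers are equivalent to one linear layer
# with weight w2 * w1 and bias w2 * b1 + b2.
w2, b2 = -3.0, 0.5


def two_layers(x):
    """Feed x through two linear layers in sequence."""
    return linear_layer(linear_layer(x, w1, b1), w2, b2)


def one_layer(x):
    """The single linear layer that the two layers collapse into."""
    return linear_layer(x, w2 * w1, w2 * b1 + b2)


if __name__ == "__main__":
    print("binary step outputs:", step_outputs)
    print("linear slopes:", slopes)
    print("two layers vs one:", two_layers(4.0), one_layer(4.0))
```

Whatever inputs are tried, the estimated slope stays at the weight of the layer, and the two-layer network returns the same value as the single collapsed layer. Swapping the identity activation for a non-linear one is what breaks this equivalence.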
